Create a cluster using the Cloud Console

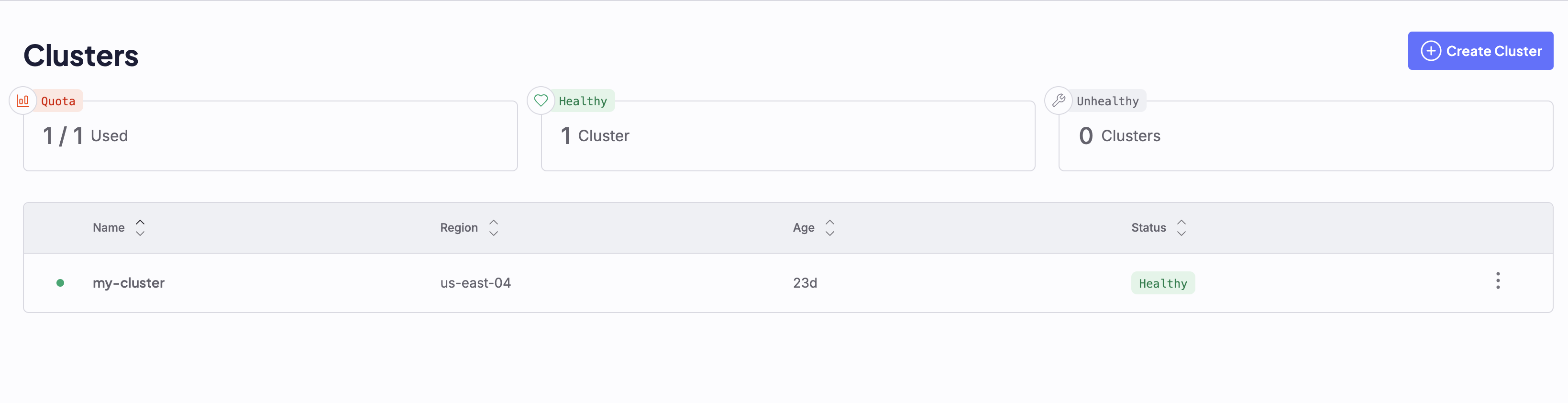

To create a new CKS cluster using the Cloud Console, open the Cluster Dashboard. From here, you can create new CKS clusters, or view and manage deployed ones. If you do not yet have any clusters, this dashboard will be empty:

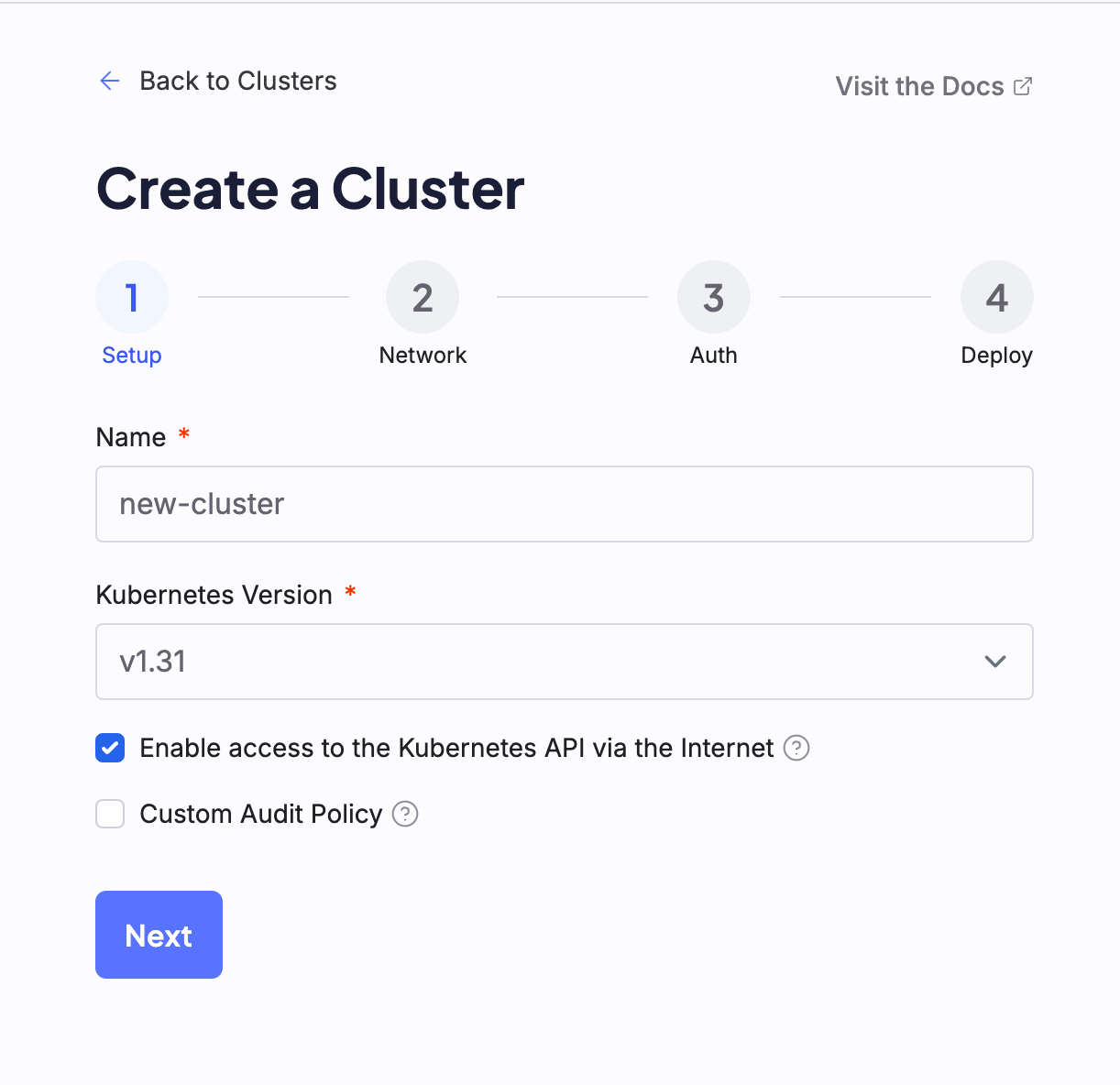

1. Setup

First, give your cluster a name. It’s best to reflect the location in the name so clusters stay organized at scale. Here are some guidelines:

- Keep names short and put the location first so that they group together naturally in reports.

- Only use lowercase letters, numbers, and hyphens to keep names friendly for URLs and automation.

- Avoid mutable details like Kubernetes version, Node Pool sizes, or temporary attributes that may change.

[short_name] is a concise descriptor of an environment, lifecycle, or workload:

- use04a-[short_name], such as use04a-prod or use04a-staging

- us-east-04a-[short_name], such as us-east-04a-prod or us-east-04a-staging
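The naming guidelines above can be checked before you type a name into the Console. The following is a minimal sketch, not an official validator: the function name `check_cluster_name`, the `zone_prefix` parameter, and the 30-character cap are assumptions made for illustration, and the Console may enforce different rules.

```python
import re

# Lowercase letters, digits, and hyphens only, starting and ending with a
# letter or digit. The start/end rule is an assumption, not a documented limit.
NAME_PATTERN = re.compile(r"^[a-z0-9]([a-z0-9-]*[a-z0-9])?$")

# Hypothetical cap used to encourage short names.
MAX_LENGTH = 30


def check_cluster_name(name: str, zone_prefix: str) -> list:
    """Return a list of guideline violations; an empty list means none found."""
    problems = []
    if not NAME_PATTERN.match(name):
        problems.append("use only lowercase letters, numbers, and hyphens")
    if not name.startswith(zone_prefix + "-"):
        problems.append("put the location first, for example " + zone_prefix + "-prod")
    if len(name) > MAX_LENGTH:
        problems.append("keep the name short")
    return problems


if __name__ == "__main__":
    for candidate in ("use04a-prod", "Prod_USE04A"):
        print(candidate, check_cluster_name(candidate, "use04a"))
```

A check like this only covers the mechanical rules. Avoiding mutable details such as a Kubernetes version in the name still needs a human review.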

To learn more about cluster Audit Policies, see the official Kubernetes documentation.

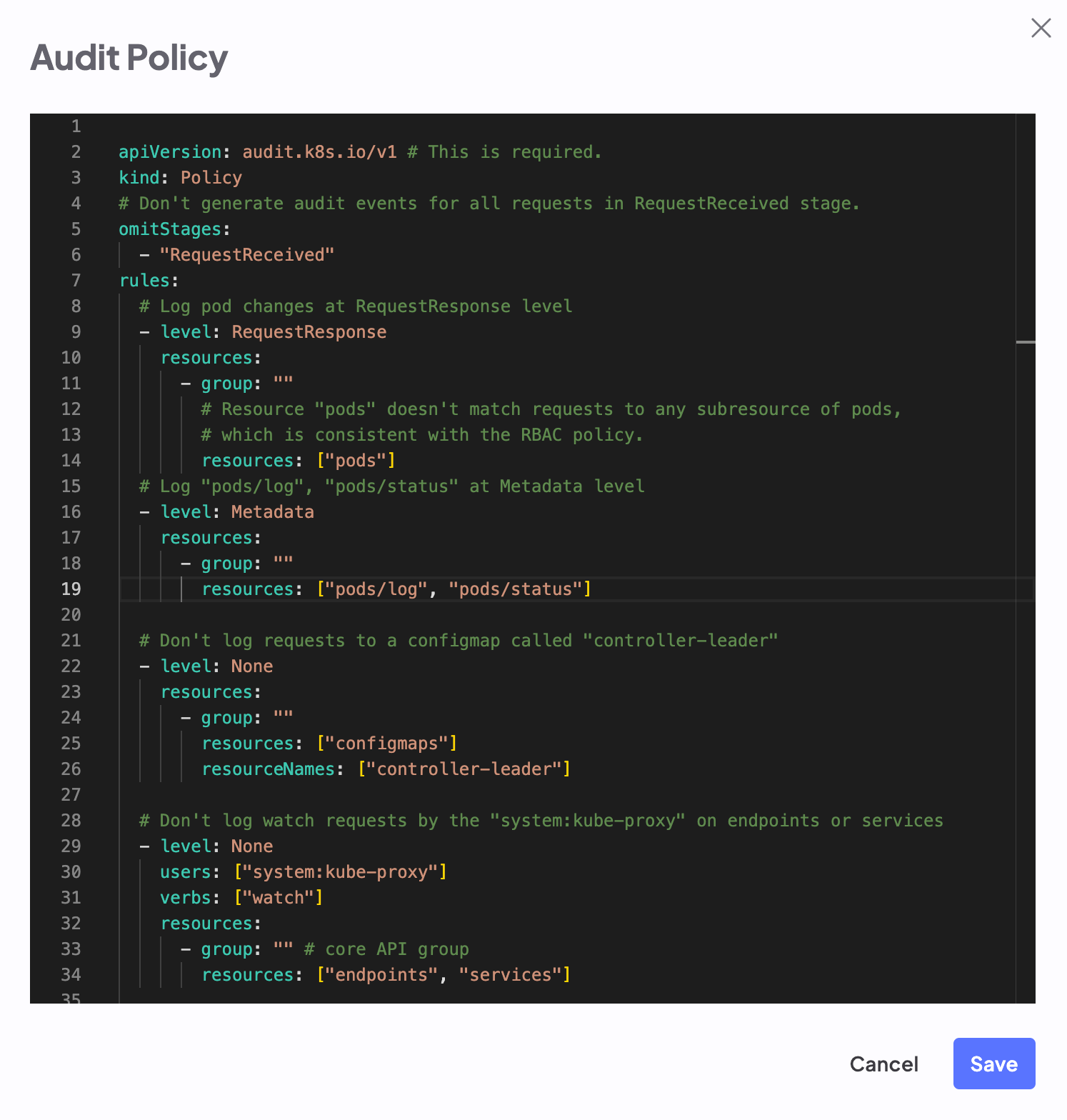

Default cluster audit policy

audit-policy.yaml

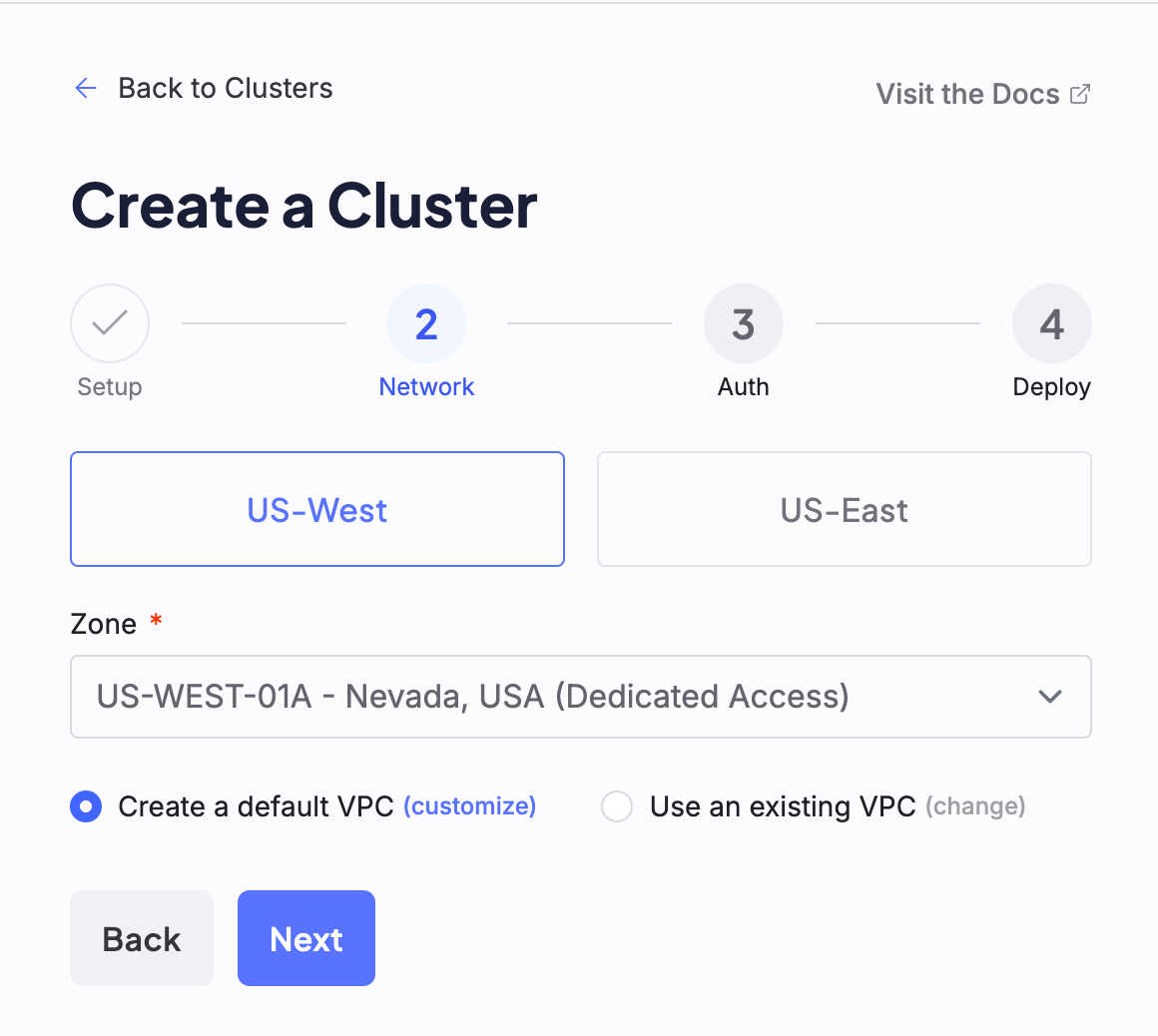

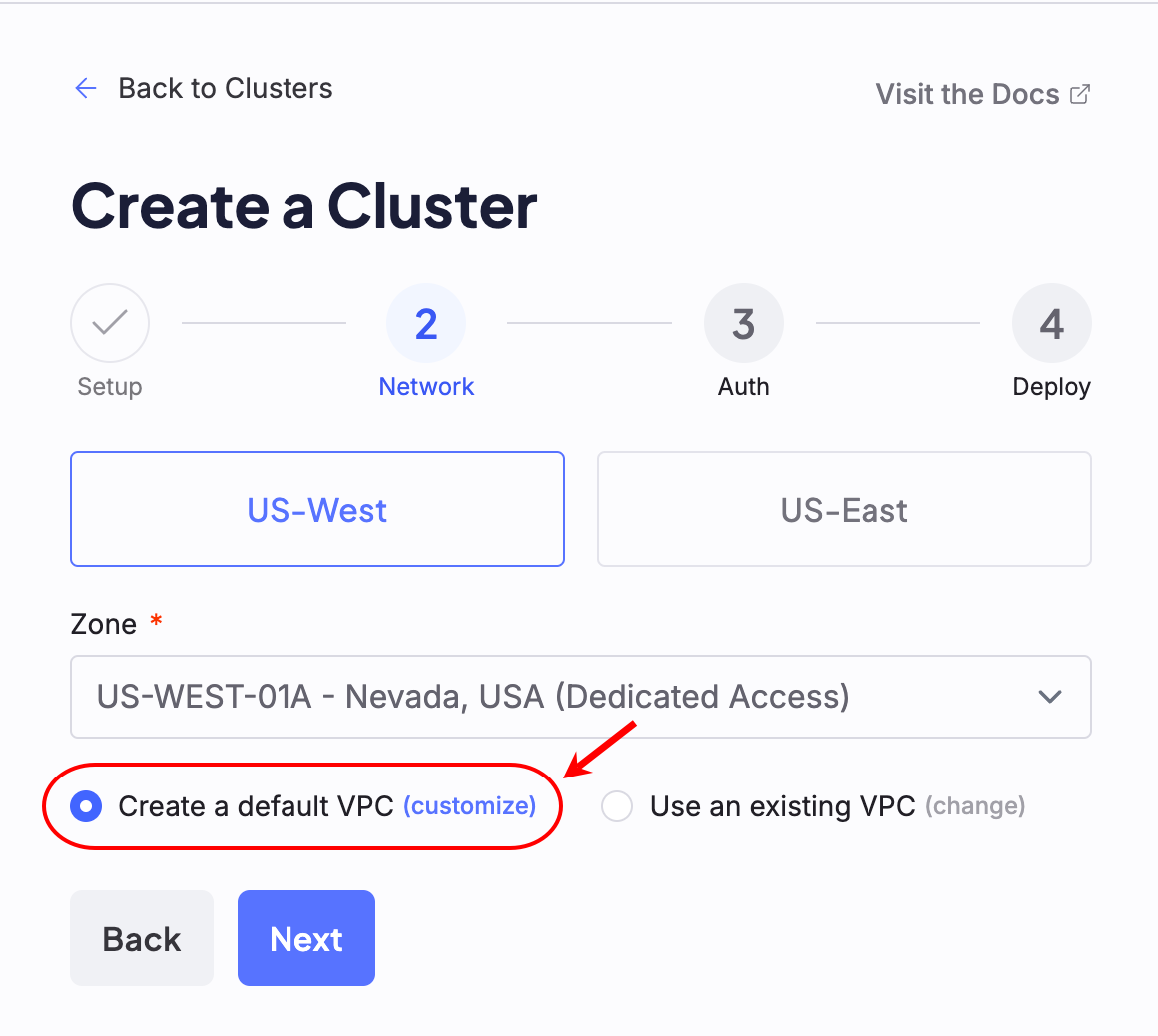

2. Network

Next, configure the network settings for the cluster. On the Network configuration page, first select the Super Region where you’d like to deploy the cluster. Then, select the Zone. Zone availability is subject to capacity.

Select a VPC

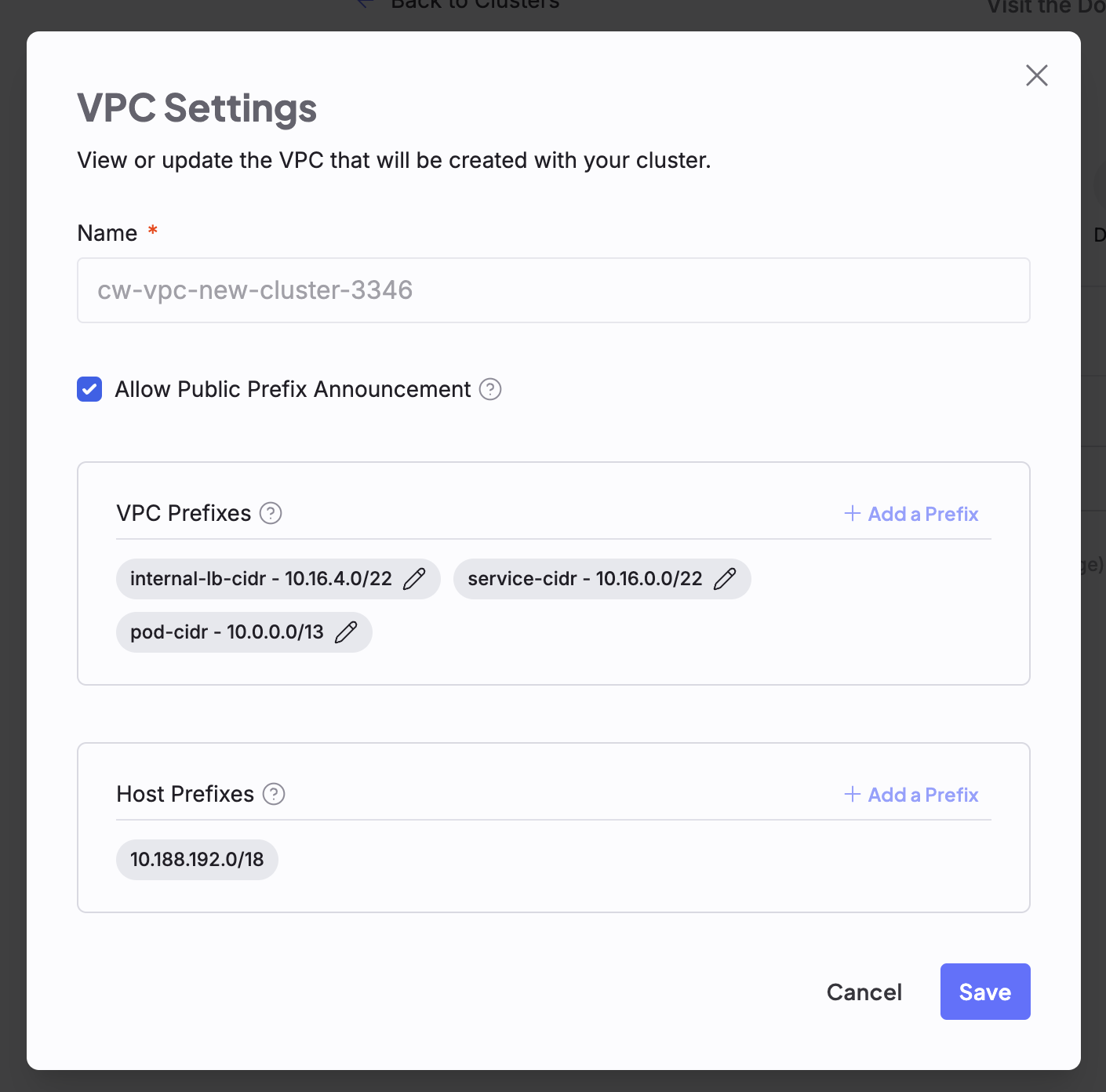

If you have already created a VPC to use with this cluster, select the same Zone where that VPC was deployed. Otherwise, you can either have a default VPC created for you during this process or create a new custom VPC. You can still customize a default VPC from this page by clicking the Customize option beside the Create a default VPC radio button.

Each Zone features its own default prefixes, which are used to populate default VPCs. These can be changed. Refer to Regions to see each Zone’s default prefixes.

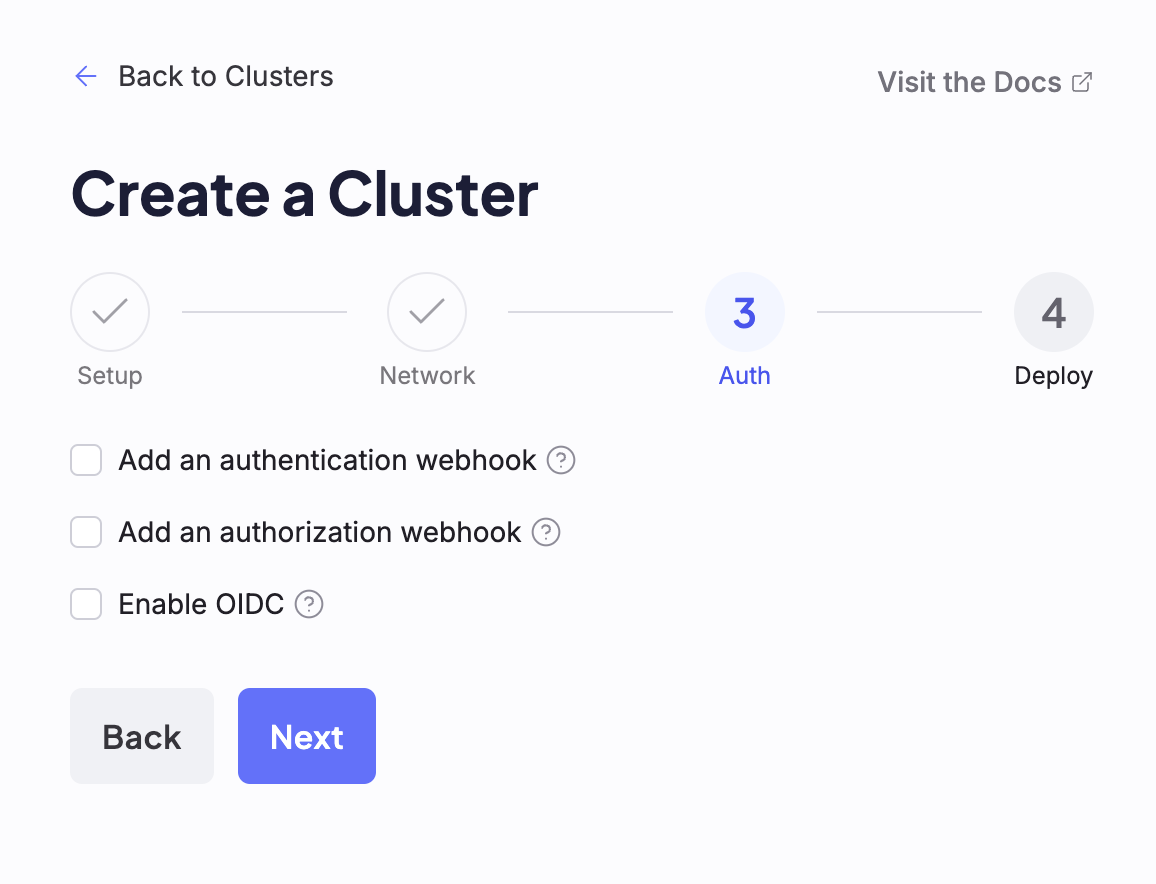

3. Auth

The Auth screen exposes authentication and authorization configuration options, which you can toggle on or off. Selecting any of these options causes additional configuration fields to appear.

All settings on this screen are optional. If you do not wish to enable any of these features, you may proceed with cluster creation by clicking the Next button, without selecting any options in this step.

Add an authentication webhook

Selecting the Add an authentication webhook checkbox causes the Server and Certificate Authority fields to appear. If this option is selected, you must provide a URL in the Server input field, and may optionally include a Certificate Authority.

To learn more about Webhook authentication in Kubernetes, see the official Kubernetes documentation.

Add an authorization webhook

Selecting the Add an authorization webhook checkbox causes the Server and Certificate Authority fields to appear. If this option is selected, you must provide a URL in the Server input field.

To learn more about Webhook authorization in Kubernetes, see the official Kubernetes documentation.

Enable OIDC

To enable OIDC, the Issuer URL and Client ID fields are required. All other fields are optional.

To learn more about OIDC for Kubernetes, see the official Kubernetes documentation.
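The required fields in this step can be summarized as a small pre-flight check. The sketch below is illustrative only: the `Webhook` and `Oidc` classes, their field names, and the `missing_fields` function are hypothetical and do not correspond to a CKS API. It also assumes that a usable Server or Issuer URL has an `http` or `https` scheme and a host.

```python
from dataclasses import dataclass
from typing import List, Optional
from urllib.parse import urlparse


@dataclass
class Webhook:
    """Hypothetical stand-in for the webhook fields on the Auth screen."""
    server: str = ""
    certificate_authority: Optional[str] = None  # optional certificate text


@dataclass
class Oidc:
    """Hypothetical stand-in for the required OIDC fields."""
    issuer_url: str = ""
    client_id: str = ""
    certificate_authority: Optional[str] = None  # optional certificate text


def looks_like_url(value: str) -> bool:
    # Assumption: a usable URL has an http(s) scheme and a host part.
    parts = urlparse(value)
    return parts.scheme in ("http", "https") and bool(parts.netloc)


def missing_fields(authn: Optional[Webhook] = None,
                   authz: Optional[Webhook] = None,
                   oidc: Optional[Oidc] = None) -> List[str]:
    """List required fields that are absent; disabled options are skipped."""
    problems = []
    hooks = (("authentication webhook", authn), ("authorization webhook", authz))
    for label, hook in hooks:
        if hook is not None and not looks_like_url(hook.server):
            problems.append(label + ": Server must be a URL")
    if oidc is not None:
        if not looks_like_url(oidc.issuer_url):
            problems.append("OIDC: Issuer URL is required")
        if not oidc.client_id.strip():
            problems.append("OIDC: Client ID is required")
    return problems


if __name__ == "__main__":
    # example.com values are placeholders, not real endpoints.
    print(missing_fields(oidc=Oidc(issuer_url="https://issuer.example.com")))
```

Passing `None` for an option models leaving its checkbox unselected, which matches the fact that every setting on this screen is optional.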

Certificate Authority

Each of the authentication and authorization options provides an optional Certificate Authority checkbox. Clicking this box opens a YAML editor, which can be used to input a Certificate Authority X.509 certificate.

4. Deploy

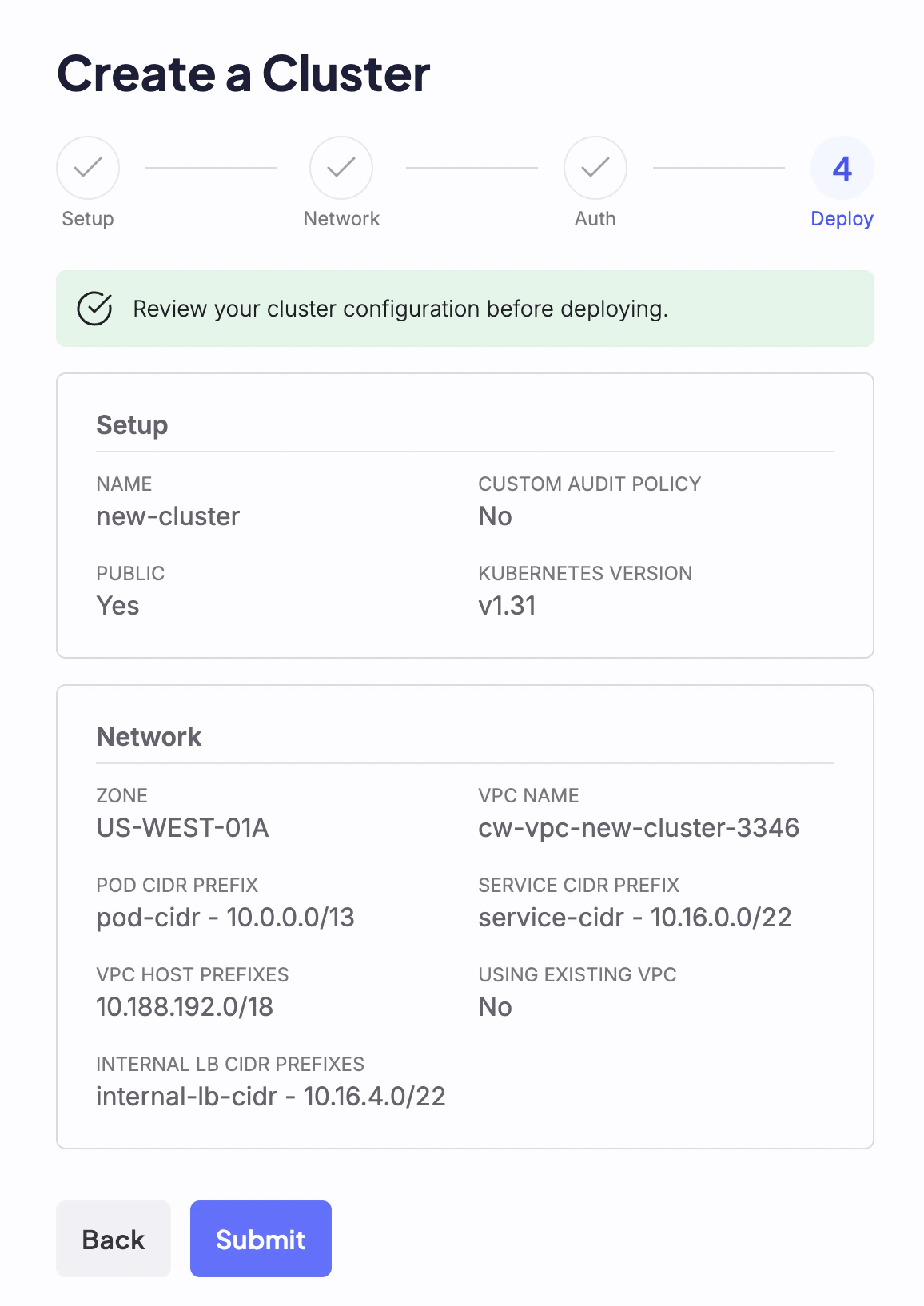

The final step of cluster creation provides an overview of all options selected during the creation process. After reviewing and confirming the cluster’s configuration, click the Submit button to deploy the new cluster.

While a new cluster is being deployed, its status is Creating. When a cluster is ready, its status changes to Healthy. If there are configuration or deployment issues, the cluster’s status changes to Unhealthy.

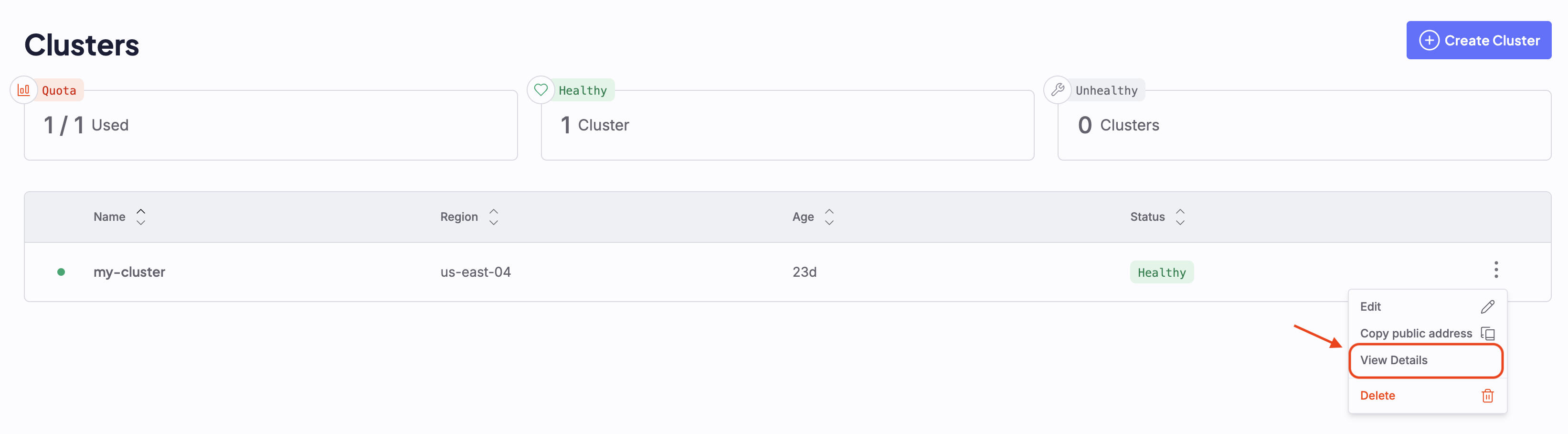

View details of deployed clusters

To view more information about a deployed cluster, click the vertical ellipsis menu beside the cluster name and select View Details. This opens a panel that shows the cluster’s current configuration in JSON, along with its age, location, name, associated API endpoint, and current state. To return to the dashboard, close this panel.

Cluster statuses

| Name | Description |
|---|---|
| Quota | Displays the number of CKS clusters your organization has deployed out of the maximum number it is allowed to create, as defined by the organization’s quota. Represented as count/quota. If you have not yet created any clusters, or you have no quota assigned, the status shown is No Quota. |
| Healthy | Displays the number of healthy clusters deployed. In a healthy cluster, all Control Plane elements, servers, and Pods are in a Healthy state. The cluster is stable and responsive, and can manage workloads. |
| Unhealthy | Displays the number of unhealthy clusters. A cluster can become Unhealthy for many reasons, including Control Plane issues, unresponsive Nodes, failing Pods, network failures, or storage problems. |
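To make the count/quota notation concrete, the sketch below interprets a Quota value copied from the dashboard. It assumes the text is either two integers separated by a slash or the literal No Quota; the function names `parse_quota` and `remaining_clusters` are hypothetical and not part of any CKS tooling.

```python
from typing import Optional, Tuple


def parse_quota(text: str) -> Optional[Tuple[int, int]]:
    """Return (count, quota), or None when the dashboard shows No Quota."""
    if text.strip().lower() == "no quota":
        return None
    count, _, quota = text.partition("/")
    # int() tolerates surrounding whitespace, so "3 / 10" also parses.
    return int(count), int(quota)


def remaining_clusters(text: str) -> Optional[int]:
    """Return how many more clusters fit under the quota, if one is assigned."""
    parsed = parse_quota(text)
    if parsed is None:
        return None
    count, quota = parsed
    return max(quota - count, 0)


if __name__ == "__main__":
    print(remaining_clusters("3/10"))
    print(remaining_clusters("No Quota"))
```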